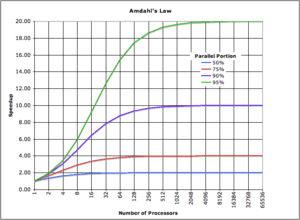

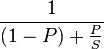

Perhaps the most important lesson I've learned while studying computer science is that of Amdahl's law.

Amdahl's law is generally used to predict the maximum speedup by improving a single component of a system (say, a function or a database). But the implications are simple: Improve the thing that will actually help.

As programmers, however, we would rather contemplate just what is the fastest way to concatenate a string. Or whether PHP is much faster than Ruby. And just how much more traffic you can handle with 5 or 10 fcgi workers. And so on. The internet is riddled with these questions. And let's not forget the age old debate of speed improvements by using raw SQL instead of an ORM.

Once upon a time I even wrote a web framework where I made sure to always use the fastest pattern of doing X in PHP. I knew databases were slow, so I did a lot of the work regarding JOINs and such in PHP.

Yeah.

Optimizing where it matters

The other day I finally assembled all the bits and pieces for an evolutionary algorithm in Haskell.

I'm trying to print Hello World by performing random changes on a population of strings - eventually I want to create an extensible framework for evolutionary algorithms that will let me write poetry programmatically.

It took 27 seconds to go 5 epochs. Just five generations.

INIT time 0.00s ( 0.00s elapsed)

MUT time 26.47s ( 27.00s elapsed)

GC time 0.62s ( 0.62s elapsed)

EXIT time 0.00s ( 0.00s elapsed)

Total time 27.08s ( 27.62s elapsed)

%GC time 2.3% (2.2% elapsed)

Productivity 97.7% of total user, 95.8% of total elapsed

Okay, it's definitely not a problem with memory access. 97.7% of the time is spent in computation, this is good, but slightly worrying. Let's do some profiling!

COST CENTRE MODULE %time %alloc

levenshtein Evaluators.Basic 91.5 100.0

levenshtein.d Evaluators.Basic 8.5 0.0

The levenshtein distance function I implemented costs 100% of computation time!

After replacing my function with the implementation suggested by Reddit life instantly became much easier. It now takes just 0.03 seconds to compute 5 epochs of the algorithm.

27 seconds -> 0.03 seconds by changing a single function.

The problem I have now is anything larger than ~25 epochs makes my computer decide something funny is going and kill the program, which says I'm doing something terrible with memory.

Then again, there are 480195 population members at the 25th epoch ... I probably don't need that many.

By the way, it's still not a memory problem per se (for 20 epochs):

Total time 10.72s ( 10.80s elapsed)

%GC time 23.4% (23.3% elapsed)

Alloc rate 2,736,341,212 bytes per MUT second

Productivity 76.6% of total user, 76.0% of total elapsed

-----

COST CENTRE MODULE %time %alloc

lev'''.lev Evaluators.Basic 61.5 58.8

lev'''.levMemo Evaluators.Basic 17.3 31.4

breedTwo Operators.Basic 2.8 2.4

breedTwo.(...) Operators.Basic 2.0 0.6

breedTwo.(...) Operators.Basic 1.3 0.6

breedTwo.(...) Operators.Basic 1.3 0.6

select.\ Selectors.Basic 1.1 0.3

lev'''.xa Evaluators.Basic 1.1 0.6

Continue reading about Amdahl's law in action - 27s to 0.03s by changing a function

Semantically similar articles hand-picked by GPT-4

- Some life advice I learned from computer science

- Haskell and randomness

- Learning me a Haskell

- Livecoding #38 - A faux AI that writes JavaScript

- I've hit a glass ceiling of coding productivity. Now what?

Learned something new?

Read more Software Engineering Lessons from Production

I write articles with real insight into the career and skills of a modern software engineer. "Raw and honest from the heart!" as one reader described them. Fueled by lessons learned over 20 years of building production code for side-projects, small businesses, and hyper growth startups. Both successful and not.

Subscribe below 👇

Software Engineering Lessons from Production

Join Swizec's Newsletter and get insightful emails 💌 on mindsets, tactics, and technical skills for your career. Real lessons from building production software. No bullshit.

"Man, love your simple writing! Yours is the only newsletter I open and only blog that I give a fuck to read & scroll till the end. And wow always take away lessons with me. Inspiring! And very relatable. 👌"

Have a burning question that you think I can answer? Hit me up on twitter and I'll do my best.

Who am I and who do I help? I'm Swizec Teller and I turn coders into engineers with "Raw and honest from the heart!" writing. No bullshit. Real insights into the career and skills of a modern software engineer.

Want to become a true senior engineer? Take ownership, have autonomy, and be a force multiplier on your team. The Senior Engineer Mindset ebook can help 👉 swizec.com/senior-mindset. These are the shifts in mindset that unlocked my career.

Curious about Serverless and the modern backend? Check out Serverless Handbook, for frontend engineers 👉 ServerlessHandbook.dev

Want to Stop copy pasting D3 examples and create data visualizations of your own? Learn how to build scalable dataviz React components your whole team can understand with React for Data Visualization

Want to get my best emails on JavaScript, React, Serverless, Fullstack Web, or Indie Hacking? Check out swizec.com/collections

Did someone amazing share this letter with you? Wonderful! You can sign up for my weekly letters for software engineers on their path to greatness, here: swizec.com/blog

Want to brush up on your modern JavaScript syntax? Check out my interactive cheatsheet: es6cheatsheet.com

By the way, just in case no one has told you it yet today: I love and appreciate you for who you are ❤️