[This post is part of an ongoing challenge to understand 52 papers in 52 weeks. You can read previous entries, here, or subscribe to be notified of new posts by email]

Have you ever wondered what it takes tell which event happened before which in a distributed system?

Neither have I, but it's a problem Leslie Lamport solved in 1978 with his paper Time, Clocks, and the Ordering of Events in a Distributed System. The paper even won the Dijkstra award in 2000.

The problem

Our lives revolve around the notion that events have an inherent ordering. That some events happen before other events. An airline ticket booking should only be granted if it happens before the flight departs. It's 3:15 if the clock doesn't read 3:16 yet and so on.

But in a distributed system the idea of "happened before" fast becomes hazy. When you have spatially separated processes that can only communicate through messages, they can't tell what in the system happened before something else.

We usually look at the clock to decide whether a happened before b, but a well defined system can only rely only on information within the system. That's why Lamport defined "happened before" without using real clocks.

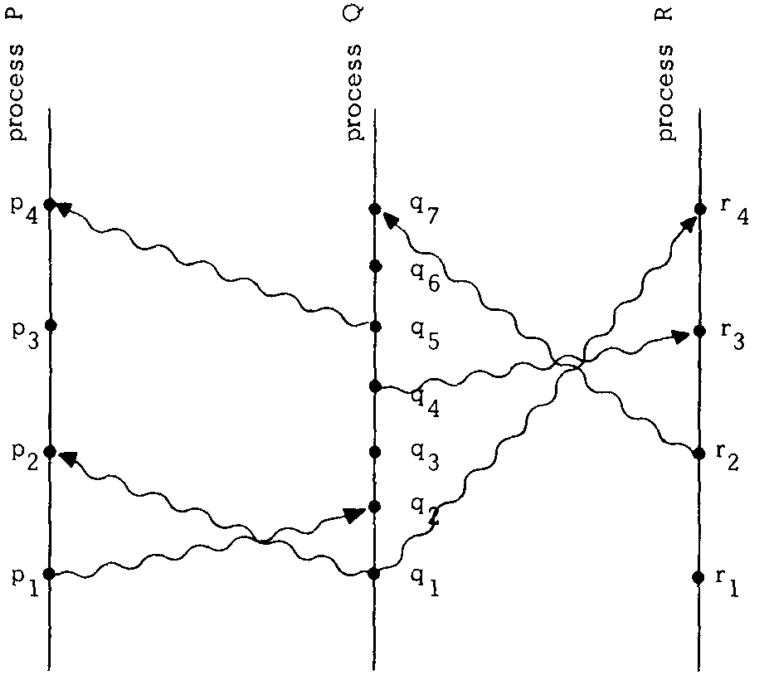

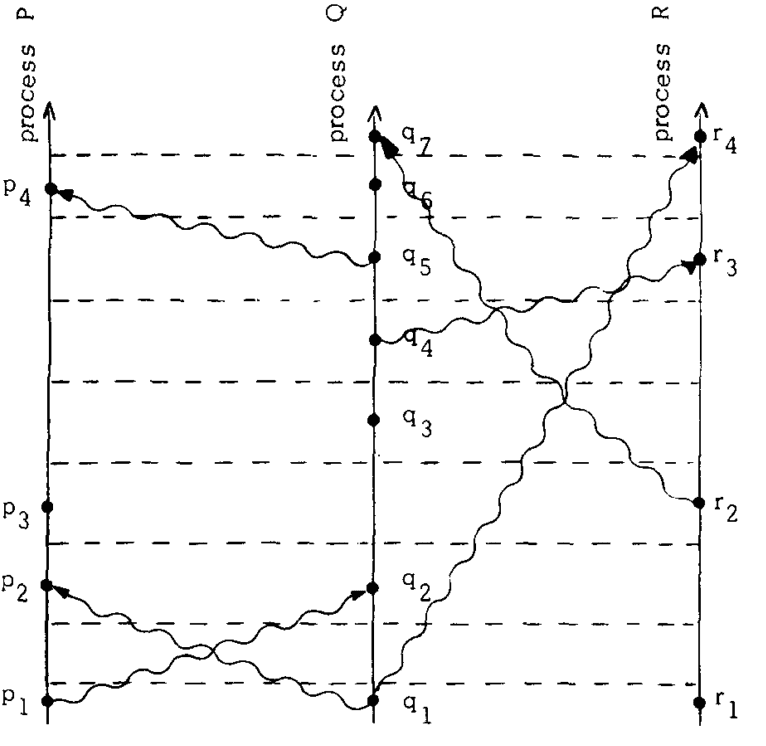

In the image, each line represents a process, a dot is an event and a squiggly line is a message being sent between two processes. Sending a message and receiving a message are both events as well.

Time as we think of it goes from bottom to top, but isn't strictly defined. In this picture you can't compare relative positions of dots in separate processes to determine order.

The relation "happened before", or → for short, between a set of events is the smallest relation that satisfies three rules:

- If a and b are in the same process and a comes before b, then a → b

- If a is sending a message and b is receiving it, then a → b

- If a → b and b → c, then a → c

We assume events can't happen before themselves because that doesn't make sense in our physical reality (although it could in others, but it'd break a bunch of other stuff as well).

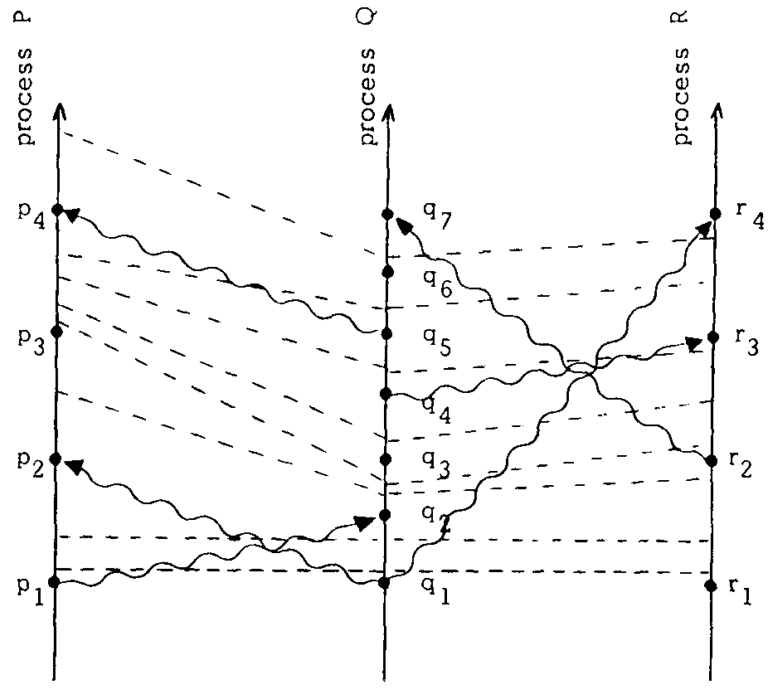

Using these rules, you can follow the progress of time by following process and message lines. If you draw time borders with a dashed line, you'll get a diagram like this:

You can look at → through the lense of causality: a happens before b, if it can affect b. In this case, process P at time p3, can't know what Q is doing in q3 until the message from q5 is received in p4. It can only guess what Q intends to do.

This goes right at the gist of our problem: it is not immediately obvious how one can put events in correct order without an external observer.

Logical clocks solution

To elegantly solve the problem Lamport introduces logical clocks, now known as Lamport timestamps. Simple counters that assign numbers to events.

Every process Pi is going to have its own clock function Ci that assigns a number Ci(a) to any event in that process. We assume these clocks aren't tied to the physical concept of time, so all it takes to make this system of clocks correct are a few rules.

- If a and b are events in Pi and a comes before b, then Ci(a) < Ci(b)

- If a is sending a message from Pi to b in Pj, then Ci(a) < Cj(b)

You'll notice these two rules are essentially saying that if a → b (a happened before b), then C(a) < C(b) (its timestamp is lower than b's). This is called the Clock Condition.

It doesn't take much to create a system of clocks that satisfies these rules. And that's just what Lamport's timestamp algorithm is, simple.

- A process Pi advances its clock Ci between successive events

- Every message must contain a timestamp Tm of when it was sent and when a process receives the message, it advances its clock to be greater than Tm

And that's it.

A system of clocks that ensures you can always tell the order of two events in the system. When two events can't influence each other through messages they are considered concurrent despite their ordering in physical time.

We can now use these timestamps to order all events in the system using an arbitrary ordering when timestamps are ambiguous. It's important to note that while the partial ordering from before is unique, the total ordering depends on clock choice and the arbitrariness of ordering events where no causal link exists.

Lamport then goes on to describe how to use clocks to design a system that can organise itself without relying on external data to order events. But this part isn't so interesting, and you can figure out your own example well enough.

The important part is that a distributed algorithm can execute the events in its state machine in the correct order, because each process is meticulous about updating the timestamps that define event and message ordering.

Anomalous behaviour

Despite our best efforts, the system can have anomalies where events are ordered differently than a user expects.

Let's say we have a global system of connected computers (not unlike the internet, but the best Lamport had at the time was ARPAnet) and a asks computer A to fulfill request R(A). Then they immediately call computer B with the request R(B).

As far as the user is concerned, A happened before B and should be fulfilled first as well. But because the events aren't causally connected, the system has no way of knowing. It might give B a lower timestamp than A.

One solution is to require the user to manually resolve anomalies by appending timestamps to requests. We don't want that.

A better solution is to introduce the Strong Clock Condition. The paper feels fuzzy here, because the only difference between the Strong Clock Condition and the Clock Condition is that the strong version's → symbol is a bold version of the normal → symbol.

But Lamport promises that due to how special relativity works, it is possible to use physical clocks that stay in sync regardless of time-wimey things.

Adding physical clocks

Adding physical clocks to the mix looks simple. Just replace the logical timestamps from before with readings from a clock that measures physical time.

The problem, however, is that you can never have a perfect clock and without synchronization clocks in separate processes are going to drift further and further apart until the system becomes useless.

Physical clocks must satisfy two conditions:

- There exists a constant k << 1 such that for all i: |dCi(t)/dt -1| < k

- For all i, j: |Ci(t) - Cj(t)| < ε

That is, the derivative of a clock's period must always be smaller than a very small constant (the clock is precise) and the absolute difference between any two clocks must always be smaller than ε (the clocks are synchronised).

Because no two clocks will ever run at the same rate on their own, we need an algorithm that synchronises a process' clock with other clocks in the system. We derive it from the logical clocks algorithm, which means that a process never needs to know the state of any clocks other than its own and message timestamps.

As always in this paper, we follow two rules:

- For every i, if Pi does not receive a message at t, then Ci is differentiable at t and dCi(t)/dt > 0

- A message m sent from Pi at t contains a timestamp Tm = Ci(t). When receiving said message at t', Pj sets Cj(t') to the maximum (Cj(t' - 0), Tm + μm)

Essentially the same as before, but with more symbols. Between every two events in a process, the clock's readout is differentiable and bigger than 0 (time passes continuously). When a message is received, the process sets its clock to the maximum of its own clock and the timestamp in the message plus a known delay of receiving the message.

This ensures that the whole system stays in sync at all times and because we are now using physical clocks that run regardless of what's going on, we have removed the anomalous behaviour from before.

Wonderful.

Fin

Lamport concludes that he has shown that "happening before" is merely a partial ordering in the system, but can be extended to a somewhat arbitrary total ordering. He also showed that a logical system and an external observer can disagree about the order of events, which he proposes to fix with physical clocks.

He also gave a theorem about the lower bound for how long it can take for clocks to become synchronised, but the maths was completely impenetrable to me, so I can't really get into the appendix part of the paper. Better luck next time.

My favourite part of the paper was its simplicity and relative obviousness. Now that I've read it, the paper makes complete sense and everything is just "d'oh", but coming up with these solutions from scratch required some serious insight.

No wonder this is one of the most cited papers in computer science.

Continue reading about Week 7: Time, Clocks, and Ordering of Events in a Distributed System

Semantically similar articles hand-picked by GPT-4

- Week 19: Relativity theory and time perception

- Week 11, Searching the Internet for evidence of time travelers

- Week 1: Turing's On computable numbers

- The problem with threads

- Why don't computers understand timezones?

Learned something new?

Read more Software Engineering Lessons from Production

I write articles with real insight into the career and skills of a modern software engineer. "Raw and honest from the heart!" as one reader described them. Fueled by lessons learned over 20 years of building production code for side-projects, small businesses, and hyper growth startups. Both successful and not.

Subscribe below 👇

Software Engineering Lessons from Production

Join Swizec's Newsletter and get insightful emails 💌 on mindsets, tactics, and technical skills for your career. Real lessons from building production software. No bullshit.

"Man, love your simple writing! Yours is the only newsletter I open and only blog that I give a fuck to read & scroll till the end. And wow always take away lessons with me. Inspiring! And very relatable. 👌"

Have a burning question that you think I can answer? Hit me up on twitter and I'll do my best.

Who am I and who do I help? I'm Swizec Teller and I turn coders into engineers with "Raw and honest from the heart!" writing. No bullshit. Real insights into the career and skills of a modern software engineer.

Want to become a true senior engineer? Take ownership, have autonomy, and be a force multiplier on your team. The Senior Engineer Mindset ebook can help 👉 swizec.com/senior-mindset. These are the shifts in mindset that unlocked my career.

Curious about Serverless and the modern backend? Check out Serverless Handbook, for frontend engineers 👉 ServerlessHandbook.dev

Want to Stop copy pasting D3 examples and create data visualizations of your own? Learn how to build scalable dataviz React components your whole team can understand with React for Data Visualization

Want to get my best emails on JavaScript, React, Serverless, Fullstack Web, or Indie Hacking? Check out swizec.com/collections

Did someone amazing share this letter with you? Wonderful! You can sign up for my weekly letters for software engineers on their path to greatness, here: swizec.com/blog

Want to brush up on your modern JavaScript syntax? Check out my interactive cheatsheet: es6cheatsheet.com

By the way, just in case no one has told you it yet today: I love and appreciate you for who you are ❤️