You know how most programmers find functional coding ever so slightly mind-bending, and how it's somewhat difficult to wrap one's head around variables whose state you cannot change, lazy evaluation, and all manner of odd things?

The thing I've had the most trouble with, and still do actually, is coding in a functionally clean manner: using more recursion, cleaner abstractions and so on. Just as I thought I was starting to get kind of good at this, a bunch of people proved me wrong when I crowdsourced some elegance.

Yeah, some people are really good at this functional stuff.

And then one day ml-class introduced me to mathematical programming with Octave. Sure, I'd done some Octave before at school, but that was just enough to get my feet wet: basic syntax and stuff. Or maybe I just wasn't paying enough attention to really grasp the awesome things I was being shown.

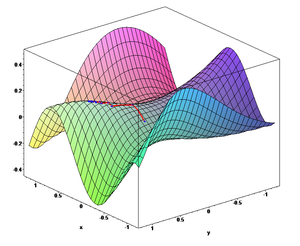

Either way, I feel as if over the past two weeks, doing machine learning homework in Octave has opened a whole new world of striving for elegance and purity in my code. If I thought functional was mind-bending, this stuff is ripping my face off.

Apparently when you take a naive loop and make it into something beautiful it's called vectorization in this field. The interesting bit here is that all you really need to perform optimization of epic proportions is some math fu, no translating the problem into something else, no looking at it from five different perspectives ... just maths and then some.

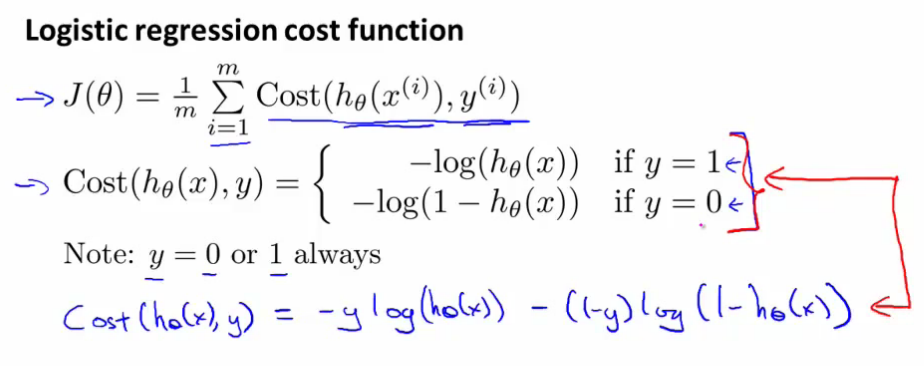

Using the gradient descent algorithm for logistic regression as an example, in particular calculating the cost function:
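For reference, this is the standard unregularized logistic regression cost and its gradient. The notation is an assumption (the usual superscript-$(i)$ convention for the $i$-th training example), since the formula itself is not spelled out here; `sigmoid` in the code plays the role of $h_\theta$:

$$
J(\theta) = \frac{1}{m} \sum_{i=1}^{m} \Big[ -y^{(i)} \log h_\theta\big(x^{(i)}\big) - \big(1 - y^{(i)}\big) \log\Big(1 - h_\theta\big(x^{(i)}\big)\Big) \Big],
\qquad
h_\theta(x) = \frac{1}{1 + e^{-\theta^{T} x}}
$$

$$
\frac{\partial J}{\partial \theta_j} = \frac{1}{m} \sum_{i=1}^{m} \Big( h_\theta\big(x^{(i)}\big) - y^{(i)} \Big)\, x_j^{(i)}
$$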

Although I think this might have been exactly the perfect example for the code below ... it's difficult to search through videos for this stuff.

The naive approach could be something like this (didn't actually run the code):

% theta is an n x 1 column vector, X is m x n, y is m x 1

J = 0;

for i = 1:m

J += -y(i)*log(sigmoid(X(i,:)*theta)) - (1-y(i))*log(1-sigmoid(X(i,:)*theta));

end

J = J/m;

for j = 1:numel(theta)

grad(j) = 0;

for i = 1:m

grad(j) += (sigmoid(X(i,:)*theta)-y(i))*X(i,j);

end

grad(j) = grad(j)/m;

end

But all those loops aren't really necessary. All they are basically doing is matrix multiplication, which gives us a nice way to vectorize the whole thing:

J = (1/m)*sum(-y'*log(sigmoid(X*theta))-(1-y')*log(1-sigmoid(X*theta)));

grad = (1/m)*(sigmoid(X*theta)-y)'*X;
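One way to convince yourself that the loops and the matrix products really compute the same thing is to run both on a toy problem. The sketch below is not from the course material: the `sigmoid` helper, the made-up data, and the `_loop`/`_vec` variable names are all assumptions chosen for illustration, with `theta` taken to be a column vector.

```octave
% Assumed helper: element-wise logistic function (not defined in the post).
sigmoid = @(z) 1 ./ (1 + exp(-z));

% Made-up toy data: m = 4 examples, n = 2 parameters (first column is the intercept).
X = [1 0.5; 1 -1.5; 1 2.0; 1 0.1];   % m x n
y = [1; 0; 1; 0];                    % m x 1
theta = [0.1; -0.2];                 % n x 1
m = size(X, 1);

% Loop version: one example (and one parameter) at a time.
J_loop = 0;
for i = 1:m
  h = sigmoid(X(i,:) * theta);
  J_loop += -y(i)*log(h) - (1 - y(i))*log(1 - h);
end
J_loop /= m;

grad_loop = zeros(1, numel(theta));
for j = 1:numel(theta)
  for i = 1:m
    grad_loop(j) += (sigmoid(X(i,:) * theta) - y(i)) * X(i,j);
  end
end
grad_loop /= m;

% Vectorized version: the sums over i become inner products.
h = sigmoid(X * theta);
J_vec = (1/m) * (-y' * log(h) - (1 - y)' * log(1 - h));
grad_vec = (1/m) * (h - y)' * X;     % 1 x n row vector

% Both versions should agree up to floating point noise.
assert(J_loop, J_vec, 1e-12);
assert(grad_loop, grad_vec, 1e-12);
```

The inner products already add up over all examples, so the outer `sum` in the one-liner above is harmless but not strictly needed.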

The difference in elegance absolutely blows my mind and I can't wait to see what other wonders I discover through this Octave thing in the course of this semester.

Pretty much all my Octave code can be found in the ml-class-homework repository. But I'm sure I'll end up modeling more algorithms in this thing.


Who am I and who do I help? I'm Swizec Teller and I turn coders into engineers with "Raw and honest from the heart!" writing. No bullshit. Real insights into the career and skills of a modern software engineer.
