I’m taking a sabbatical week over the holidays. This week’s posts will serve as a sort of report of what I got up to the previous day instead of the usual schedule – wish me luck that I achieve even half of what I’d like to.

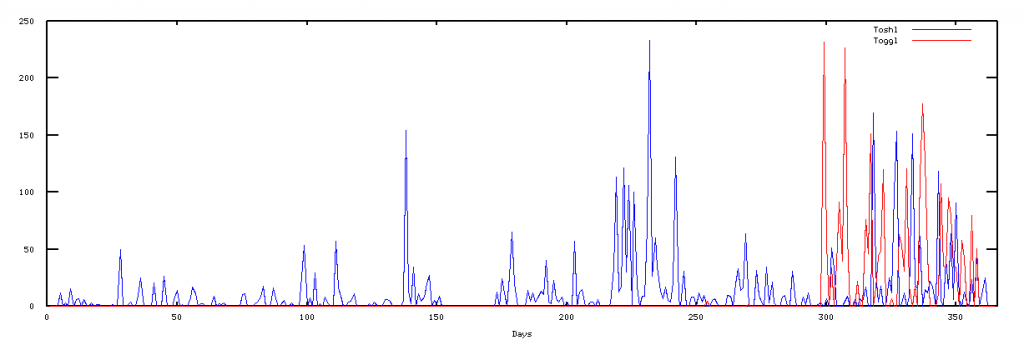

After I managed to get the Toggl and Toshl datasets on Monday, it was time to do something useful with them yesterday. Turns out I'm not very good at doing useful things with datasets, because my biggest achievement of the day was coming up with a plot of the data.

You know that all 'round awesome data format that is JSON? Every programming language except Java has a nice and easy interface for loading and saving right into native data structures. This makes it perfect and all 'round awesome! So it seemed only natural that my node.js scripts for fetching data would be storing it in JSON for future use.

Or so I thought.

If there is one thing I learned in ml-class, it's that one should always take some time to first model their machine learning algorithm in a mathematical language like MATLAB/Octave before implementing it in a production-like language. Something about how all those matrix operations are easier and how having a language created especially for the task makes it that much easier to play around.

I guess octave is to machine learning as InDesign or Illustrator are to web design?

Turns out that not only does Octave lack a native way of reading JSON, but even when you find a library, it is impossible to say "Here is a file, make it a string yo!" It just doesn't work. All files need to have a format or something ... it's really quite silly.

Luckily there was a simple solution - just dump the data as a column of numbers and Octave couldn't have been happier about it.
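A minimal sketch of that dump step in Node.js, assuming the fetched data is a JSON array of records with one numeric field of interest. The file names and the `duration` field are hypothetical placeholders, not the actual shape of the Toggl or Toshl data:

```javascript
const fs = require("fs");

// Turn an array of records into plain text with one number per line.
// `field` is a hypothetical key; swap in whatever the dataset really uses.
function toColumn(records, field) {
  return (
    records
      .map((record) => Number(record[field]))
      // Drop records where the field is missing or not numeric.
      .filter((value) => Number.isFinite(value))
      .join("\n") + "\n"
  );
}

// Read a JSON file and write the plain-text column to another file.
function dumpColumn(jsonPath, outPath, field) {
  const records = JSON.parse(fs.readFileSync(jsonPath, "utf8"));
  fs.writeFileSync(outPath, toColumn(records, field));
}

// Example call (hypothetical file names):
// dumpColumn("toggl.json", "toggl.txt", "duration");
```

A file like that should then be readable in Octave with something along the lines of `data = load("toggl.txt")`, since `load` accepts plain-text numeric data.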

As mentioned, I didn't get very far. This graph is the extent of my achievements yesterday:

Just for fun, I tried running linear regression on this data and, as expected, it failed horribly. The lowest cost is a function along the lines of y = -6.5541e+88*x + -4.8840e+90 ... I'm not even sure that coming up with fake-ish quadratic and cubic terms would do much good in this case, and since I only have a single parameter, neural networks wouldn't do much good either.

And either way, anything that comes even close to modeling this data will suffer from horrible overfitting and won't be much use anyway ... luckily I have some other ideas I can try.
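For a single input, the least-squares line has a closed form, so the fit can also be computed directly as a sanity check on coefficients like the ones above. A minimal sketch in JavaScript using made-up points rather than the Toggl/Toshl numbers; `fitLine` is a hypothetical helper, not code from the original experiment:

```javascript
// Ordinary least squares for one feature: y ≈ slope * x + intercept.
function fitLine(xs, ys) {
  const n = xs.length;
  const meanX = xs.reduce((sum, x) => sum + x, 0) / n;
  const meanY = ys.reduce((sum, y) => sum + y, 0) / n;

  let covariance = 0; // sum of (x - meanX) * (y - meanY)
  let varianceX = 0; // sum of (x - meanX)^2
  for (let i = 0; i < n; i++) {
    covariance += (xs[i] - meanX) * (ys[i] - meanY);
    varianceX += (xs[i] - meanX) ** 2;
  }

  const slope = covariance / varianceX;
  return { slope, intercept: meanY - slope * meanX };
}

// Made-up points lying exactly on y = 2x + 1.
const fit = fitLine([0, 1, 2, 3], [1, 3, 5, 7]);
console.log(fit.slope, fit.intercept); // prints 2 1
```

This only says something about the best straight line; it does nothing about the overfitting concern for anything more flexible.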


Who am I and who do I help? I'm Swizec Teller and I turn coders into engineers with "Raw and honest from the heart!" writing. No bullshit. Real insights into the career and skills of a modern software engineer.
