Monkey patching is a programming technique popular in the Ruby world and nipped in the bud by the JavaScript community. Good.

Remember the smoosh controversy? JavaScript couldn't adopt Array.prototype.flatten because MooTools used that name in the 2010s and a native method with different behavior might break the web. That was due to monkey patching.

So what's monkey patching anyway?

I'm glad you asked.

Monkey Patching

the term monkey patch only refers to dynamic modifications of a class or module at runtime, motivated by the intent to patch existing third-party code as a workaround to a bug or feature which does not act as desired

You can think of monkey patching as a magic trick. Run a function and it changes how other functions behave without changing the source code.

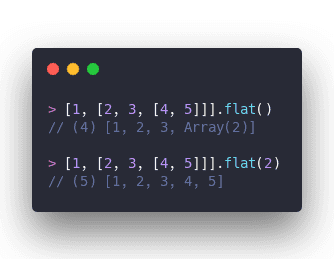

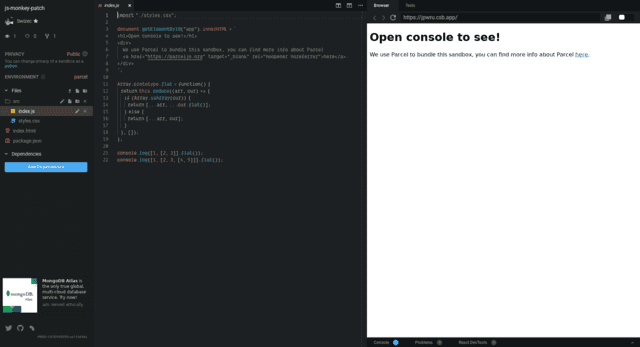

Let's take .flat for example. It flattens nested arrays up to a depth you pass in as an argument, one level by default.

Now let's say you disagree with the levels of recursion argument. You want .flat to always completely flatten an array.

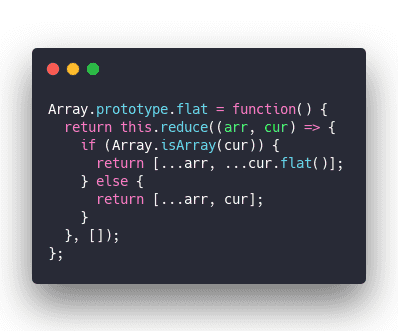

You can overwrite JavaScript's native .flat implementation. Run this somewhere, anywhere, in your codebase.

Overwrite Array.prototype.flat and replace it with a function of your own.

.reduce the array and use ... to combine values. When the current value is an array, go into recursion, otherwise use the value.
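A minimal sketch of the patch described above could look like this. The variable name `nested` is mine, and this version deliberately ignores the depth argument, so treat it as an illustration rather than a drop-in replacement.

```javascript
// Sketch: replace the native method with one that always flattens completely.
// A regular function (not an arrow) is needed so `this` is the array.
Array.prototype.flat = function () {
  return this.reduce(
    (acc, value) =>
      Array.isArray(value)
        ? [...acc, ...value.flat()] // nested array: recurse through the patched method
        : [...acc, value], // plain value: keep it as-is
    []
  );
};

const nested = [1, [2, [3, [4, [5]]]]];
console.log(nested.flat()); // [1, 2, 3, 4, 5]
```

Note that passing a depth, like `nested.flat(1)`, now makes no difference. That's exactly the kind of surprise a patch like this springs on the rest of the codebase.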

Every array in your codebase now has this function. 🧙‍♂️

Here's a CodeSandbox to prove it works

When monkey patching goes rogue

Monkey patching on its own ain't bad at all. It's a great tool when used responsibly. You can make non-standard methods easy to use, add to your language's standard library, and even fix bugs.

Polyfills are an example of successful monkey patching in the JavaScript world. They add features to browsers that otherwise don't have them.
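What makes a polyfill the responsible kind of patch is the guard: it only touches the prototype when the feature is missing. Here's a simplified sketch, assuming a runtime without .flat. It's not a spec-complete implementation (it doesn't handle sparse arrays the way the native method does, for example).

```javascript
// Polyfill-style patch: only define the method when the runtime lacks it.
if (typeof Array.prototype.flat !== "function") {
  Object.defineProperty(Array.prototype, "flat", {
    configurable: true,
    writable: true,
    enumerable: false, // keep it out of for...in loops, like native methods
    value: function flat(depth = 1) {
      if (depth < 1) return this.slice(); // nothing left to flatten
      return this.reduce(
        (acc, value) =>
          // concat spreads the flattened sub-array one level and appends plain values
          acc.concat(Array.isArray(value) ? value.flat(depth - 1) : value),
        []
      );
    },
  });
}

console.log([1, [2, [3]]].flat()); // [1, 2, [3]]
```

In a modern browser or Node the guard is false and nothing gets patched, which is the whole point.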

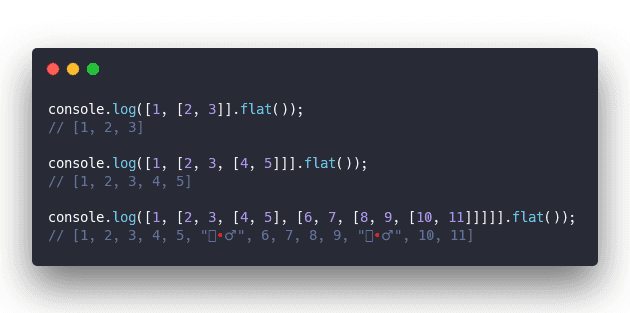

But we can take that .flat example from before and make it sinister.

Whoa where'd that wizard come from?

Some unscrupulous programmer monkey patched our .flat method to add a wizard after every 5th element. How dare they play such a trick on us!
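A prank like that takes only a few lines. This is my own reconstruction of the idea, with made-up names `nativeFlat` and `WIZARD`. It wraps the native method and tampers with the result.

```javascript
// Illustration only: a prank patch that wraps the native method.
const WIZARD = "🧙‍♂️";
const nativeFlat = Array.prototype.flat; // keep a reference to the real thing

Array.prototype.flat = function (...args) {
  const flattened = nativeFlat.apply(this, args); // do the real work first
  const result = [];
  flattened.forEach((value, index) => {
    result.push(value);
    if ((index + 1) % 5 === 0) result.push(WIZARD); // sneak in a wizard after every 5th element
  });
  return result;
};

console.log([1, 2, [3, 4], 5, 6, [7]].flat());
// [1, 2, 3, 4, 5, "🧙‍♂️", 6, 7]
```

Nothing at the call site hints that anything changed. You call .flat like you always do and get a wizard back.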

And then monkey patching took 2 days off my life

That's what happened to me one fateful day when a feature finally shipped to production after a million rounds of testing. We got it to work, product was happy, QA was happy, PR was happy. Ship it.

boom 💥

I mean the feature worked but ...

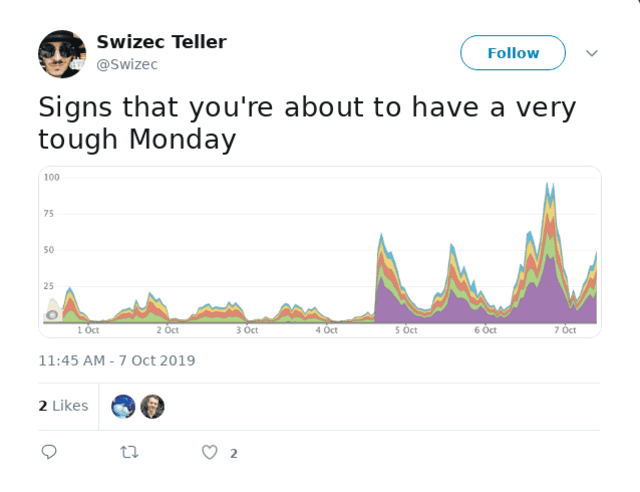

Trouble started on Friday as soon as we deployed. Yes we deployed on Friday.

Our Sentry Slack channel started blowing up with ActiveRecord::ConnectionTimeoutError: could not obtain a database connection within 5.000 seconds errors.

That's a bad error because it means your server couldn't establish a connection to your database. When that happens, nothing works. The request fails and the user cries.

Luckily most errors happened in background processes and users didn't notice. Think we got 1 actual user complaint?

But a 3% error rate every time you do anything is no joke.

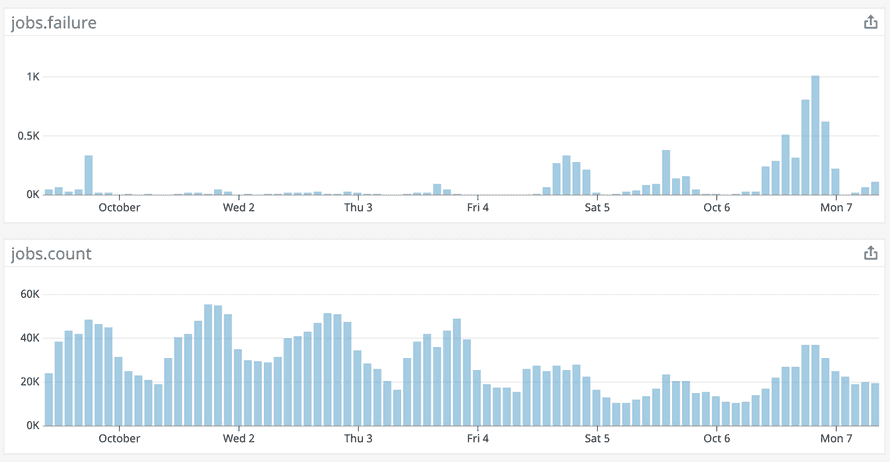

We like to keep jobs.failure at a cool 0.1% or so. Now it shot up to almost 3%. 30 times worse 😬

So what happened?

We couldn't figure it out for the life of us. The database wasn't out of memory and there were plenty of connections left in the connection pool. That ruled out the two common causes of connection timeouts.

What's worse, our memory and connection usage went down because of the issue. With 3% less load the database was absolutely thriving. She was loving it!

And yet our application was failing.

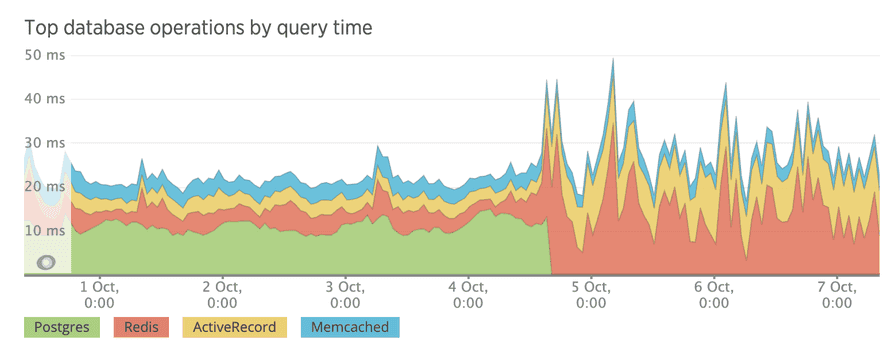

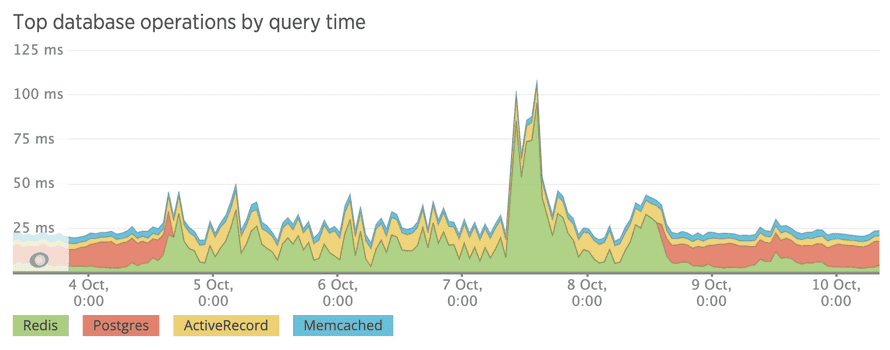

I spent that entire Monday poring over logs, staring at graphs, smashing my face against New Relic, and even reading the entire code diff between new production and old production.

Nothing.

We didn't change how we talk to the database. We didn't add a bunch of background processes competing for resources. We didn't even change any configuration. One of our queries just happened to start taking 10x longer.

it was a coincidence

Graphs don't just change color like that at the exact same timestamp your deploy went through because of a coincidence.

At wit's end we tried a thing.

What if we remove that gem we added? That's the only thing left. Could that library that enables CSV data imports have something to do with this?

It worked. We lost a feature and gained a working system.

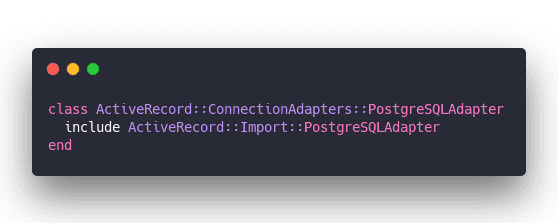

Digging through the gem's codebase we found this in a dependency.

and this ...

You know what that means? It means this gem messes with every single Postgres operation in your codebase.

Every single one of them. They go through this gem whether you like it or not.
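The gem's code is Ruby and I'm not reproducing it here. As a rough analogy in JavaScript, with an entirely hypothetical `Connection` class standing in for a database driver, this is how a dependency can put itself in front of every query without your code ever mentioning it.

```javascript
// Hypothetical stand-in for a database driver's connection class.
class Connection {
  query(sql) {
    return `result of: ${sql}`;
  }
}

// What a patching dependency might do at load time (illustrative, not the gem's code).
const originalQuery = Connection.prototype.query;
const intercepted = [];
Connection.prototype.query = function (sql) {
  intercepted.push(sql); // the patch sees every statement ...
  return originalQuery.call(this, sql); // ... before the real driver does
};

// Application code that never references the dependency still goes through it.
const db = new Connection();
db.query("SELECT 1");
console.log(intercepted); // ["SELECT 1"]
```

If the wrapper is slow or holds on to something it shouldn't, every query in the app pays for it, and nothing in your own diff will show why.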

And that's how I lost 2 days of my life that I'm never getting back. A gem monkey patching my database connection.

Cheers,

~Swizec
